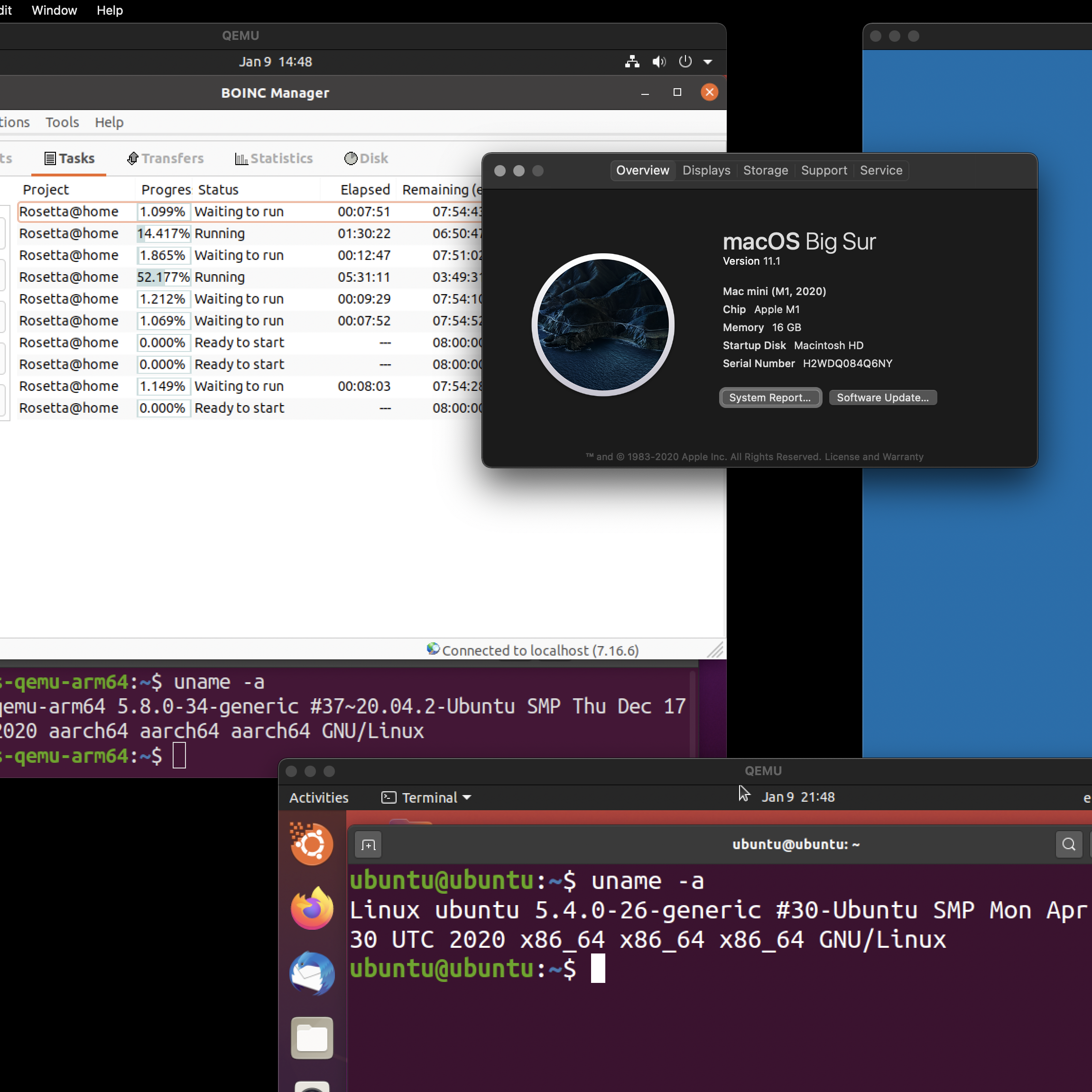

World Community Grid doesn't have GPU clients for most projects. Using GPUs may require a redesign of the code base, a very expensive task in terms of developer effort, and it makes the code 'brittle': researchers and developers may find it much harder to write new protein algorithms. The insight comes from deep learning, which uses a lot of CUDA code. TensorFlow and Caffe (PyTorch) are built and designed around CUDA. Today, if you want to run TensorFlow or Caffe on AMD GPUs, you find that you are *stuck* with NVIDIA. For that matter, even switching to a 'CPU only' build is difficult, as there really isn't an easy way to translate all that CUDA code back to a CPU-based one. Alternative (non-CUDA) GPU code 'doesn't exist', especially for the complicated neural net layers and models.

Nevertheless, it is true that a GPU can speed up apps designed for it by hundreds of times compared with a conventional CPU. My guess is the same would happen should Rosetta choose to go the GPU route. It is a tradeoff of sorts, a cost-benefit tradeoff: most people have at least a CPU (a PC, that is), and many are running a recent Haswell, Skylake, Kaby Lake, Ryzen or later CPU, so more people can participate in CPU projects.

GPUs run *hot*, *very hot*, and burn power like aluminium smelters: an NVIDIA GTX 1070 easily burns upwards of 150 watts, and higher-end models burn even more, say 200-300 watts. That needs a large power supply, and it would be necessary to run the card in an environment that can remove all that heat.
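To put the wattage figures in perspective, here is a back-of-the-envelope sketch of daily energy use, E = P × t. It assumes the card runs around the clock at the quoted board power, which likely overstates a real crunching load; the function name `daily_energy_kwh` is hypothetical and only for illustration.

```python
# Back-of-the-envelope daily energy use for a GPU crunching 24/7.
# Wattages are the figures quoted in the post; running at full board
# power all day is an assumption for illustration only.

def daily_energy_kwh(watts: float, hours: float = 24.0) -> float:
    """Energy in kWh: E = P * t, with P in watts and t in hours."""
    return watts * hours / 1000.0


if __name__ == "__main__":
    cards = [
        ("1070-class card", 150),
        ("higher-end card (low estimate)", 200),
        ("higher-end card (high estimate)", 300),
    ]
    for label, watts in cards:
        print(f"{label}: {watts} W -> {daily_energy_kwh(watts):.1f} kWh/day")
```

At 150 W this works out to 3.6 kWh per day, and at 300 W to 7.2 kWh per day, all of which ends up as heat in the room.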

GPUs are basically massively parallel FPUs, so yes, they can calculate the 3D positions of protein molecules and even do some physics; NVIDIA GPUs for sure, and I think AMD ones too. The people who are verbally attacking you are doing so because they're covering for the ineptitude of this project, so I'd recommend going with if you have a recent enough GPU; it's also protein folding, so you'd be helping scientists with it. Yes, and well-done projects like have both CPU and GPU clients, but it won't happen on this project because it is a barely functioning, mediocre one, unfortunately: there are not enough developers, or they don't really know what they are doing. is huge in RAM and storage and not responsive, especially the Android client unless you have a really fast SoC in your phone, and it has lots of WU shortages, etc. The Android client looks like it's being developed, but still.

WCG was always good at keeping the WUs relatively small and, if not "light", responsive and not needing much RAM, so I think is huge on RAM and storage because it's not well developed, and probably never was; by now it must be a nightmare to fix. Anyway, this happened on an optical recognition of cancer cells project in WCG: each WU took at least 6 hrs, up to 12, typically 8-10 hrs. Then a guy came along who knew the field of study and had experience in distributed computing, and he made a lot of optimizations: the results were even more precise, and most WUs took 2 hrs. That doesn't happen here and probably never will. I have complained many times about different issues, such as how they don't even put enough WUs in the queue, and all I got were empty promises that it would never happen again. I also made suggestions, and they never even said thank you.
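The claim that GPUs can compute the 3D positions of protein molecules rests on data parallelism: if each atom's update depends only on its own state, every atom can be handled by a separate GPU thread. The sketch below is a toy explicit-Euler position step, not Rosetta's or any GPU client's actual algorithm; the names `euler_step` and `step_all` are hypothetical, and the thread pool only stands in for GPU threads to show that the updates are independent.

```python
# Toy illustration of a data-parallel per-atom update (explicit Euler).
# Each atom's new position depends only on its own position and velocity,
# so all updates can run independently. A thread pool stands in for GPU
# threads; in CPython the GIL means this will not actually run faster,
# the point is only that no update depends on another.
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def euler_step(pos: Vec3, vel: Vec3, dt: float) -> Vec3:
    """Advance one atom: x_new = x + v * dt, component by component."""
    return (pos[0] + vel[0] * dt, pos[1] + vel[1] * dt, pos[2] + vel[2] * dt)


def step_all(positions: List[Vec3], velocities: List[Vec3], dt: float) -> List[Vec3]:
    """Apply euler_step to every atom independently via a worker pool."""
    with ThreadPoolExecutor() as pool:
        # Executor.map preserves input order, so results line up with atoms.
        return list(pool.map(euler_step, positions, velocities, [dt] * len(positions)))


if __name__ == "__main__":
    positions = [(0.0, 0.0, 0.0), (1.0, 2.0, 3.0)]
    velocities = [(1.0, 0.0, 0.0), (0.0, -1.0, 0.5)]
    print(step_all(positions, velocities, 0.5))  # [(0.5, 0.0, 0.0), (1.0, 1.5, 3.25)]
```

A real force-field step is harder than this, because forces couple atoms to their neighbours, but the per-atom structure is what lets such code spread across thousands of GPU cores.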

I'd like to see a GPU client for is MUCH, MUCH, MUCH, MUCH, MUCH, MUCH faster than a CPU.
